81 Lines of Merge Conflict. -95% Traffic. Google Has Zero Patience for AI Slop.

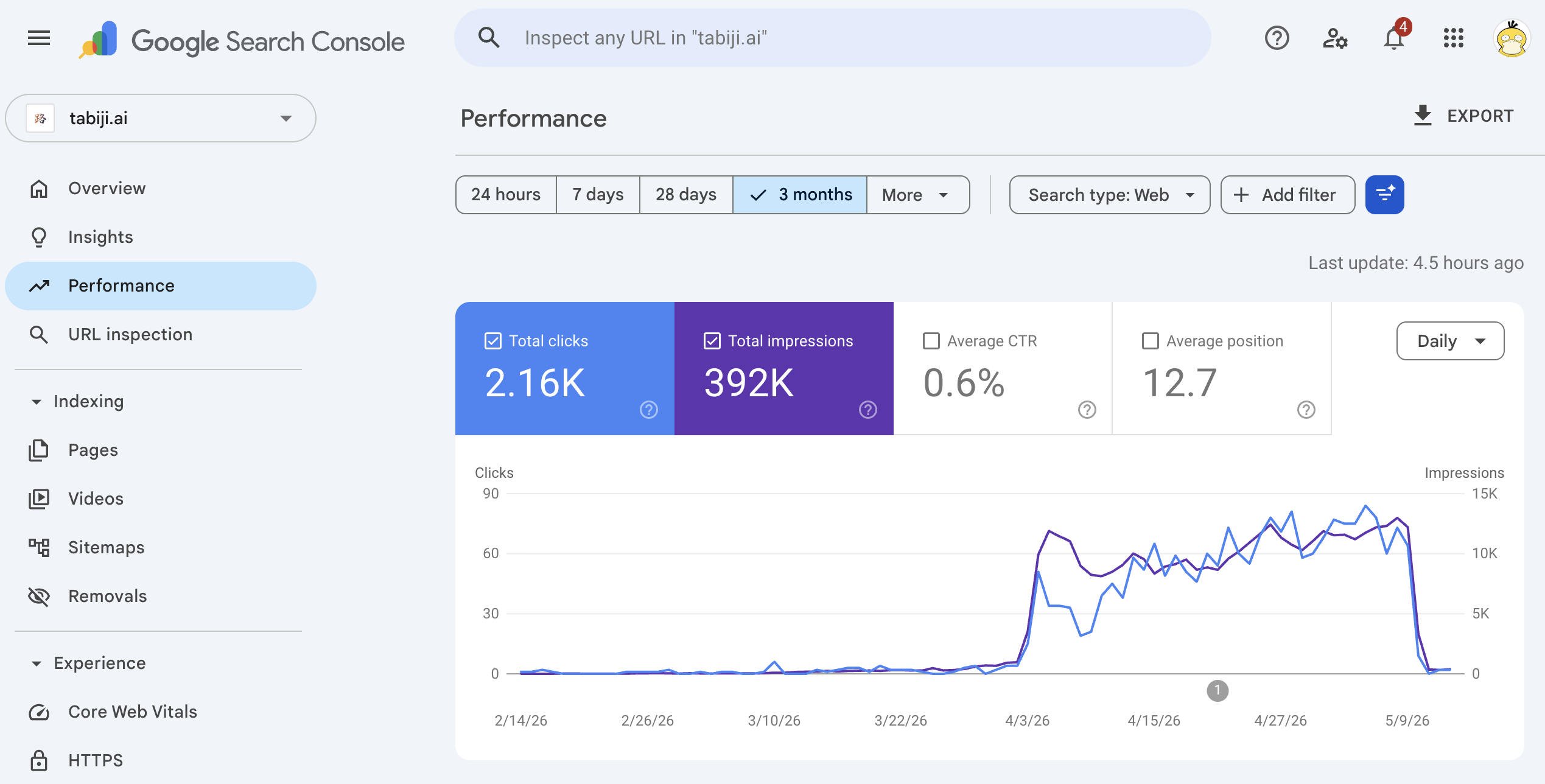

On May 10, 2026, one of my AI agents shipped a PR that left 81 lines of raw git conflict markers — <<<<<<< HEAD, =======, >>>>>>> sha — sitting in plain HTML on tabiji.ai's main scams hub. Four hours later, the broken state was patched. Four days later, Google had cut our organic search traffic by ~95%. We haven't recovered.

An AI agent shipped a merge conflict to tabiji.ai's production HTML for four hours; Google cut our search traffic by 95% in four days and we haven't recovered.

- 81 lines of raw `<<<<<<< HEAD` rendered to every visitor on /scams/, the site's strongest organic page.

- Both clicks and impressions cratered: Google didn't just stop sending traffic, it stopped showing the pages.

- Cleanup uncovered 1,244 broken links, 237 broken images, 32 latent bugs across the site — once Google looked, it found a lot.

AI is going to ship merge conflicts. Build a pre-ship gate before Google decides what kind of publisher you are.

This is a story about a small mistake that turned out not to be small at all.

What broke

Tabiji.ai runs a fleet of AI agents. They write copy, build pages, produce videos, refactor code, ship PRs. Most of the time it works. This time, it didn't.

The forensic record is on GitHub:

- 5/8 — PR #1490: we found and patched merge-conflict residue on the scams hub. Thought we were clear.

- 5/10 02:39 EDT — a subsequent PR landed with fresh residue. 81 conflict marker lines, stacked between the hero stat pills, rendering to every visitor.

- 5/10 06:39 EDT — emergency fix in PR #1513. The broken state was live for roughly four hours.

Four hours. That's it.

What Google saw

On a normal day, the scams hub is one of tabiji's strongest pages. It ranks for hundreds of "[city] scams to avoid" queries and pulls in dozens of daily clicks plus second-order internal traffic.

For four hours, that same URL was rendering literal git conflict syntax in the visible body. To Google's quality classifier, the signal is unambiguous: broken site, low-quality publisher. By the next morning, the click-through curve had bent. Within 96 hours, ~95% of our pre-crash daily clicks were gone.

The more telling line in the chart above is the purple one. Impressions cratered alongside clicks. Google didn't just stop sending traffic — it stopped showing the pages in search results. The line is still flat. There's been no recovery.

What it wasn't

Before pinning this on Google's quality bar, I checked the obvious explanations. None of them held up.

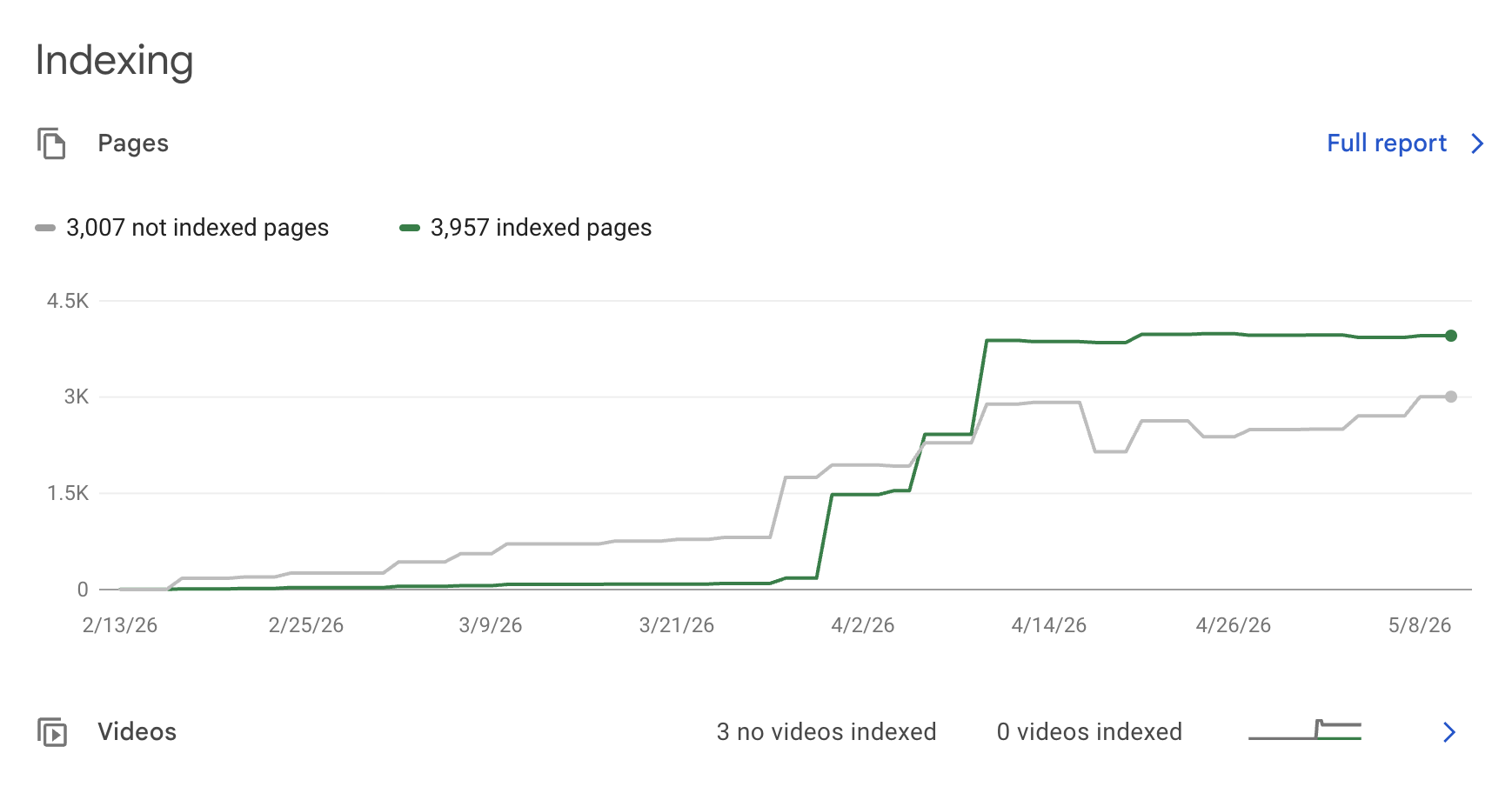

The pages are still indexed. Google Search Console (GSC) shows 3,957 indexed URLs with the line still climbing, so this is not a deindexing event. Google still has the pages; it just stopped showing them.

No security issues. Not a hack. Not a malware flag.

No manual penalty. Nobody at Google manually flagged the site.

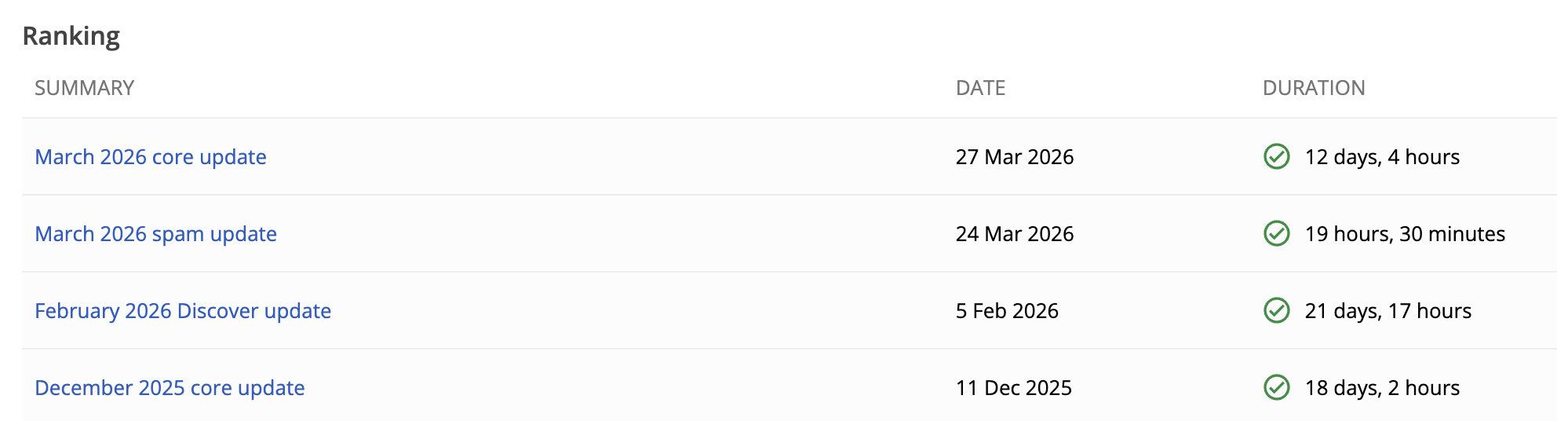

No Google algorithm update during the cliff window. Per Google's own Search Status dashboard, the last announced ranking update finished March 27, 2026 — six weeks before our crash. No core update active, no spam update, no Discover update. The cliff happened in a quiet window.

So: pages still indexed, no penalty, no algorithm shake-up. The cause was internal. The 81 lines of conflict markers fired off a quality signal, and the rest of the site's latent mess gave Google enough corroborating evidence to act on it.

What else was wrong

When we audited what else was broken on the site — partially because we were panicking, partially because if Google had downgraded us we wanted to know exactly what they'd seen — the catalog was ugly:

- PR #1530 — 479 broken hub-card links + 19 empty sections.

- PR #1531 — 1,244 broken internal links across 379 unique targets.

- PR #1535 — 24 latent bugs: entity double-encoding, duplicate DOM IDs, raw markdown leaking into rendered HTML.

- PR #1536 — 8 more: broken HTML, leftover `console.log`s, security issues.

- PR #1537 — 237 broken CDN image references + 6 missing schema blocks across 121 files.

None of these alone would tank a site. Together, they paint a picture of a publisher who is not being careful. The merge conflict was the loudest offense. Once Google had a reason to look harder, there was a lot to find.
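Several of the categories in that catalog are mechanically detectable. What follows is a minimal sketch, not the audit we actually ran: it assumes pages are available as HTML strings and that you can build a set of valid root-relative paths for the site. The names `PageAudit`, `audit_page`, and `known_paths` are hypothetical, and the double-encoding regex is a heuristic that can produce false positives.

```python
import re
from collections import Counter
from html.parser import HTMLParser


class PageAudit(HTMLParser):
    """Collects element ids and root-relative link targets from one HTML page."""

    def __init__(self):
        super().__init__()
        self.ids = Counter()
        self.internal_links = []

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if not value:
                continue
            if name == "id":
                self.ids[value] += 1
            elif tag == "a" and name == "href":
                # Only root-relative links ("/foo/"), not protocol-relative ("//cdn...").
                if value.startswith("/") and not value.startswith("//"):
                    # Strip query string and fragment before comparing paths.
                    self.internal_links.append(re.split(r"[?#]", value, maxsplit=1)[0])


# Heuristic: an escaped ampersand immediately followed by another entity body,
# e.g. "&amp;amp;" or "&amp;quot;". Assumption: such runs are never intentional.
DOUBLE_ENCODED = re.compile(r"&amp;(?:[a-zA-Z]+|#\d+|#x[0-9a-fA-F]+);")


def audit_page(html_text, known_paths):
    """Return duplicate ids, broken internal links, and double-encoded entities."""
    parser = PageAudit()
    parser.feed(html_text)
    parser.close()
    return {
        "duplicate_ids": sorted(k for k, n in parser.ids.items() if n > 1),
        "broken_links": sorted({p for p in parser.internal_links if p not in known_paths}),
        "double_encoded": DOUBLE_ENCODED.findall(html_text),
    }
```

Run over every built page with `known_paths` derived from the build output, a non-empty result in any of the three lists is a reason to hold the deploy.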

Why Google's bar quietly moved

The internet is being buried under AI-generated garbage. AI-spun listicles. AI review farms. AI sites that publish faster than any human team can — and worse than any human reviewer would. Google has noticed. The quality bar has shifted, especially for sites that look algorithmically generated.

For a human-published site, a busted page is forgiven. People ship bugs sometimes. Whatever.

For an AI-published site, the same bug looks like one more data point in a pattern. "Are you a careful publisher, or are you part of the slop flood?" One broken page can't answer that on its own. Five can. So can one extremely loud one.

The site you're reading this on — Zonted — is fine, because it ships a few human-reviewed posts a week. The site that broke — tabiji.ai — ships hundreds of pages a week, autonomously, with AI. Google evaluates them differently now. I think this is going to be true of every AI-native site this year.

The lesson

If you're running AI agents that ship code to production, you need a pre-ship quality gate. Doesn't matter much which kind:

- A lint check that fails the build on `<<<<<<<` patterns (twenty minutes of work).

- An audit agent that reviews every PR before merge.

- A staging tier that holds AI-generated builds long enough for a human eye.

- Whatever else fits your stack.
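The first option on that list can be sketched in a few lines. This is one possible shape under stated assumptions, not the gate I ended up shipping: it assumes the build output is a directory of `.html` files, and the function names `find_conflict_markers` and `check_tree` are made up for illustration. A bare `=======` line is only flagged inside an open conflict, so a plain-text underline elsewhere does not trip the check.

```python
from pathlib import Path


def find_conflict_markers(text):
    """Return (line_number, line) pairs that look like git conflict markers."""
    hits = []
    in_conflict = False
    for number, line in enumerate(text.splitlines(), start=1):
        if line.startswith("<<<<<<< "):
            # Start of a conflict block, e.g. "<<<<<<< HEAD".
            in_conflict = True
            hits.append((number, line))
        elif line.startswith(">>>>>>> "):
            # End of a conflict block, e.g. ">>>>>>> <sha>".
            in_conflict = False
            hits.append((number, line))
        elif in_conflict and line.rstrip() == "=======":
            # Separator between the two sides; only counted inside a block.
            hits.append((number, line))
    return hits


def check_tree(root, suffixes=(".html",)):
    """Return 'path:line: text' strings for every marker found under root."""
    problems = []
    for path in sorted(Path(root).rglob("*")):
        if path.is_file() and path.suffix in suffixes:
            text = path.read_text(encoding="utf-8", errors="replace")
            for number, line in find_conflict_markers(text):
                problems.append(f"{path}:{number}: {line.strip()}")
    return problems
```

In a CI step, something like `sys.exit(1 if check_tree("dist") else 0)` would fail the build on any hit; `dist` here stands in for whatever your build directory is called.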

I didn't have one. I now do. But it took 95% of our search traffic to teach me that lesson, and the lesson is still being charged to my account daily because the recovery curve hasn't started yet.

The point isn't that AI shouldn't ship code. The point is: AI is going to ship merge conflicts. AI is going to leave console.logs. AI is going to introduce duplicate IDs and double-encode entities and let markdown leak through into the DOM. That's just true. It will happen.

The question is whether what it shipped gets to production before someone — or some other agent — checks it.

The cost of getting that wrong, in the year Google decided to take AI slop seriously, is higher than I expected.
