AI Comics: Do's & Don'ts, 5+ Models Tested

We needed 50 four-panel comics for tabiji’s scam-warning pages. We shipped 733 across eight countries. The pipeline broke in the middle.

- Nano Banana Pro won the five-model showdown for English text rendering and character consistency

- Thailand shipped in 90 seconds with hand-authored scripts; scaling to 8 countries broke at a 9% error rate when a keyword classifier misrouted scams (the Brandenburger Tor petition rendered as a U-Bahn pickpocket)

- V2 rebuild: per-scam Gemini 2.5 Pro synthesis under a strict JSON schema, plus a fail-loud quality gate — 733 comics total, ~$15/country, zero silent fallbacks

Full pipeline, prompts, the postmortem, and seven generalizable lessons for AI-illustration at scale below.

"Make a four-panel comic" is easy. "Make fifty four-panel comics that look like they came from the same illustrator, feature the same recurring cast, render English speech bubbles correctly, and don't drift into generic AI-slop" is a completely different project. The difference is entirely about consistency, and consistency is the thing almost every AI image tool is currently bad at.

Below: the route we took, what worked, what didn't, and the exact recipe — model choices, prompt structure, API endpoints, and gotchas — that got us from "Midjourney keeps writing SARPER TOLT in the speech bubbles" to fifty production comics shipped to tabiji.ai/scams/country/th/ on a Saturday afternoon — and then what silently broke (and what we rebuilt) when we tried to scale that pipeline past a single country to 733 comics across eight of them.

Why comics, and why this is hard

We build tabiji.ai, a travel-safety site. One of the things we publish is per-city scam pages — "the twenty-five scams you'll encounter in Bangkok," broken down with red flags, how-to-avoid tips, and Reddit source links. They're useful. They're also dense walls of text, and we've watched real users bounce when confronted with eight consecutive scam write-ups without a single visual break.

The audience for these pages skews older and slightly female — think the couple planning their first Thailand trip, not the 24-year-old backpacker. That demographic rewards warmth and storytelling over edginess. A cautionary tale rendered as a cozy watercolor comic would do something a sidebar callout can't: communicate the shape of the scam — the friendly stranger, the tuk-tuk, the too-good deal, the regret — in five seconds of scanning.

So the brief was clear: one four-panel comic per scam, 2×2 grid, warm hand-painted watercolor, English speech bubbles that tell the story, a recurring cast of four characters we could rotate across fifty scams without the faces drifting into twenty-five different strangers. Simple to describe. Deceptively hard to produce.

Three things make this hard at scale:

- Text rendering. Most image models mangle English. You get speech bubbles full of plausible-looking gibberish. This is the #1 reason AI comics have never worked.

- Character consistency. Generate the same "silver-haired 60-something woman in a straw hat" twice and you'll get two different women. Now do it fifty times.

- Style drift. Every generation nudges the palette, linework, and paper texture slightly. Over fifty comics that drift becomes visible — the first ten look like one illustrator, the last ten like a different one.

Everything below is how we solved each of those three problems.

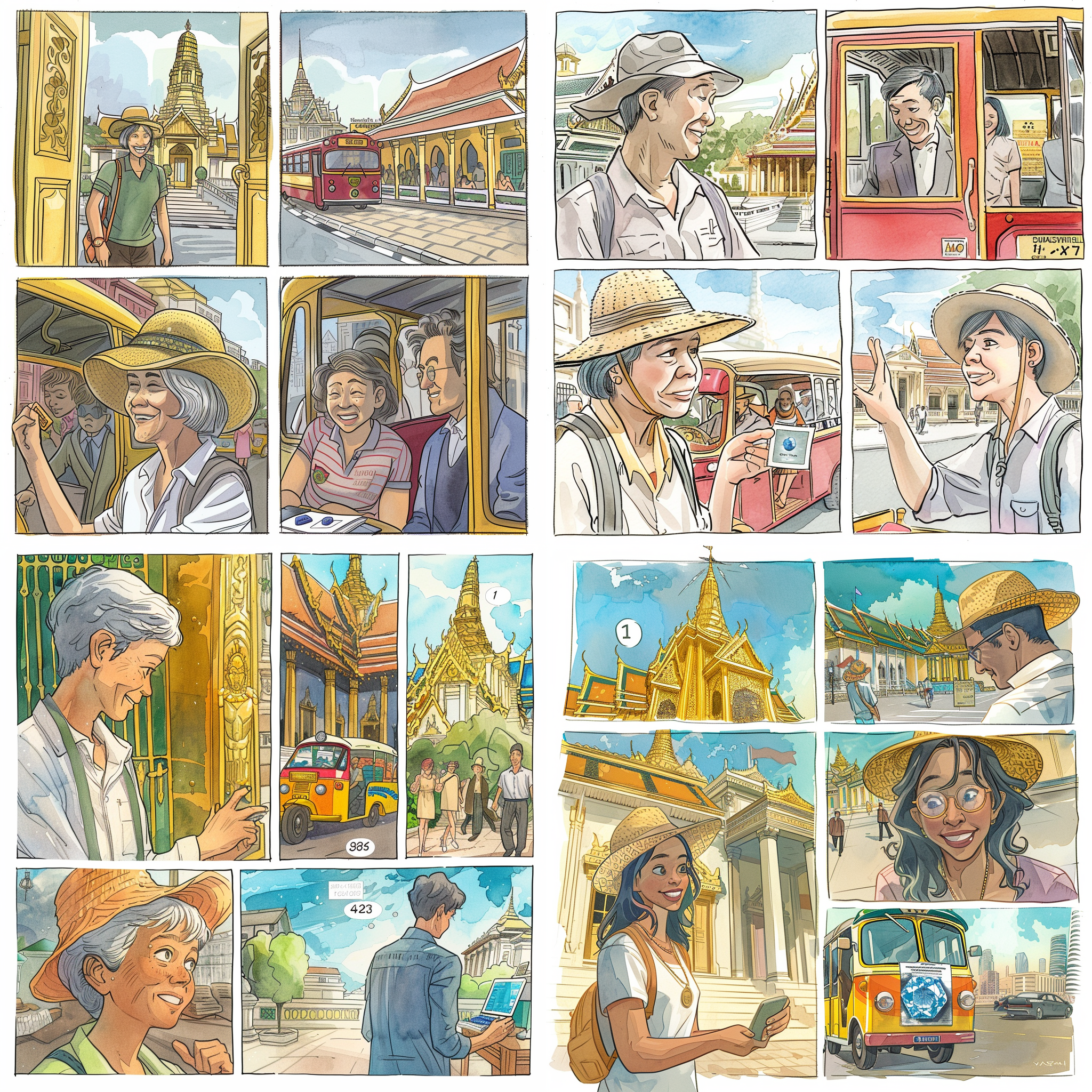

Round 1: Midjourney — good styles, broken text

We started where most people start: Midjourney. Since we were driving it through automation, we used the Apify imageaibot/midjourney-bot actor, which wraps Midjourney's Discord API behind a normal HTTP endpoint. Submit via action=imagine, poll via action=getTask, retrieve the image URL.

We generated the same four-panel Grand Palace scam story in five different styles to find the right aesthetic for our audience:

- Warm watercolor storybook — Beatrix Potter-ish, soft pastels

- Hergé / Tintin ligne claire — clean black outlines, bright flats

- New Yorker editorial cartoon — refined ink with watercolor wash

- Studio Ghibli / Miyazaki — warm painterly anime

- Mid-century travel poster — bold flat color blocks, 1950s screen-print texture

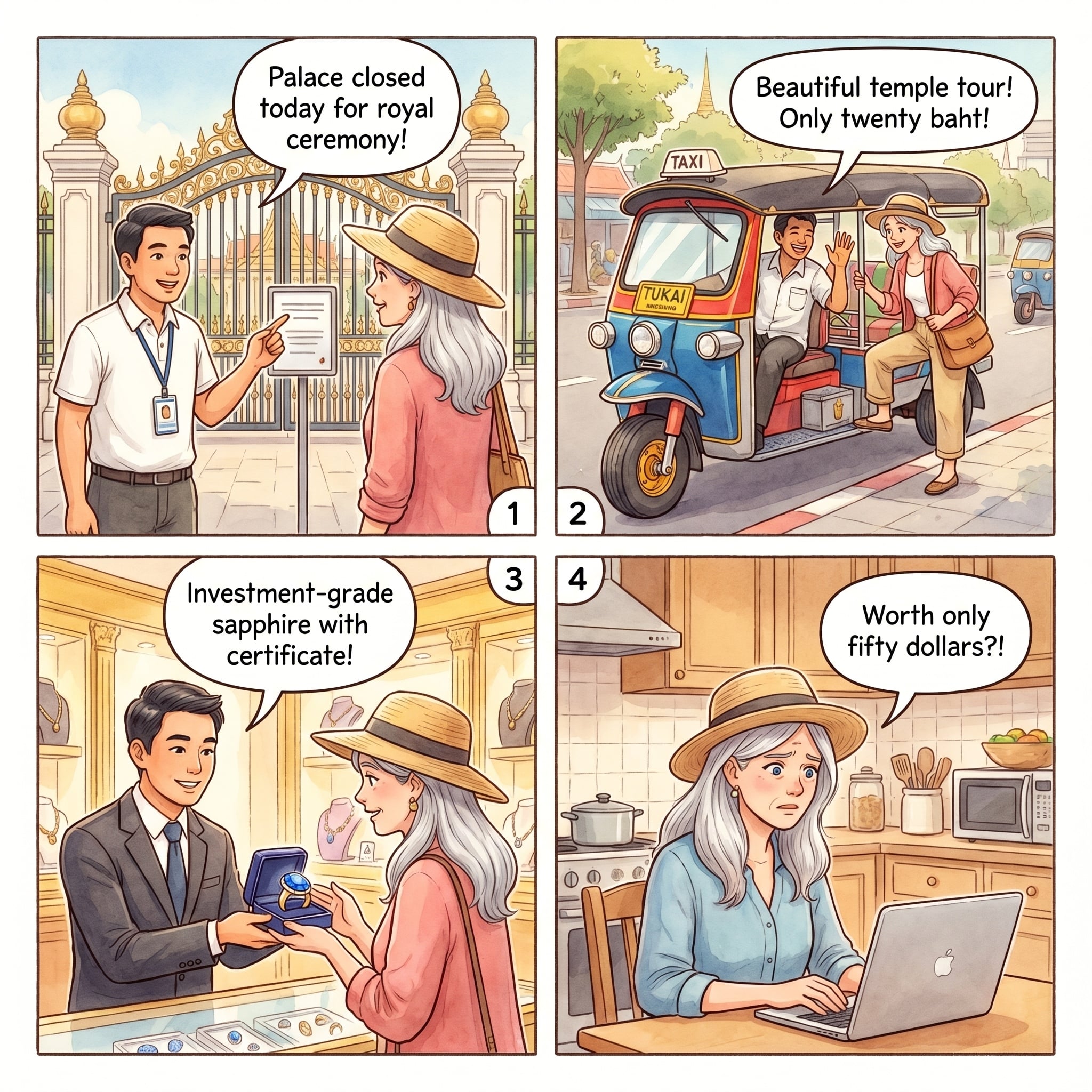

Each prompt described the same 4-panel story (palace "closed" → tuk-tuk → gem shop → regret at home) so we could compare styles apples-to-apples. Here's what Midjourney returned for the watercolor version — what we picked as the winning aesthetic:

The watercolor look was right: warm, unthreatening, legible faces, a silver-haired protagonist that read as ~60. The four other styles had their moments — the Tintin had stronger architecture, the New Yorker had the most sophisticated faces — but they all skewed more masculine-adventure or more editorial-arch than our audience wanted.

Style locked. Now add the speech bubbles.

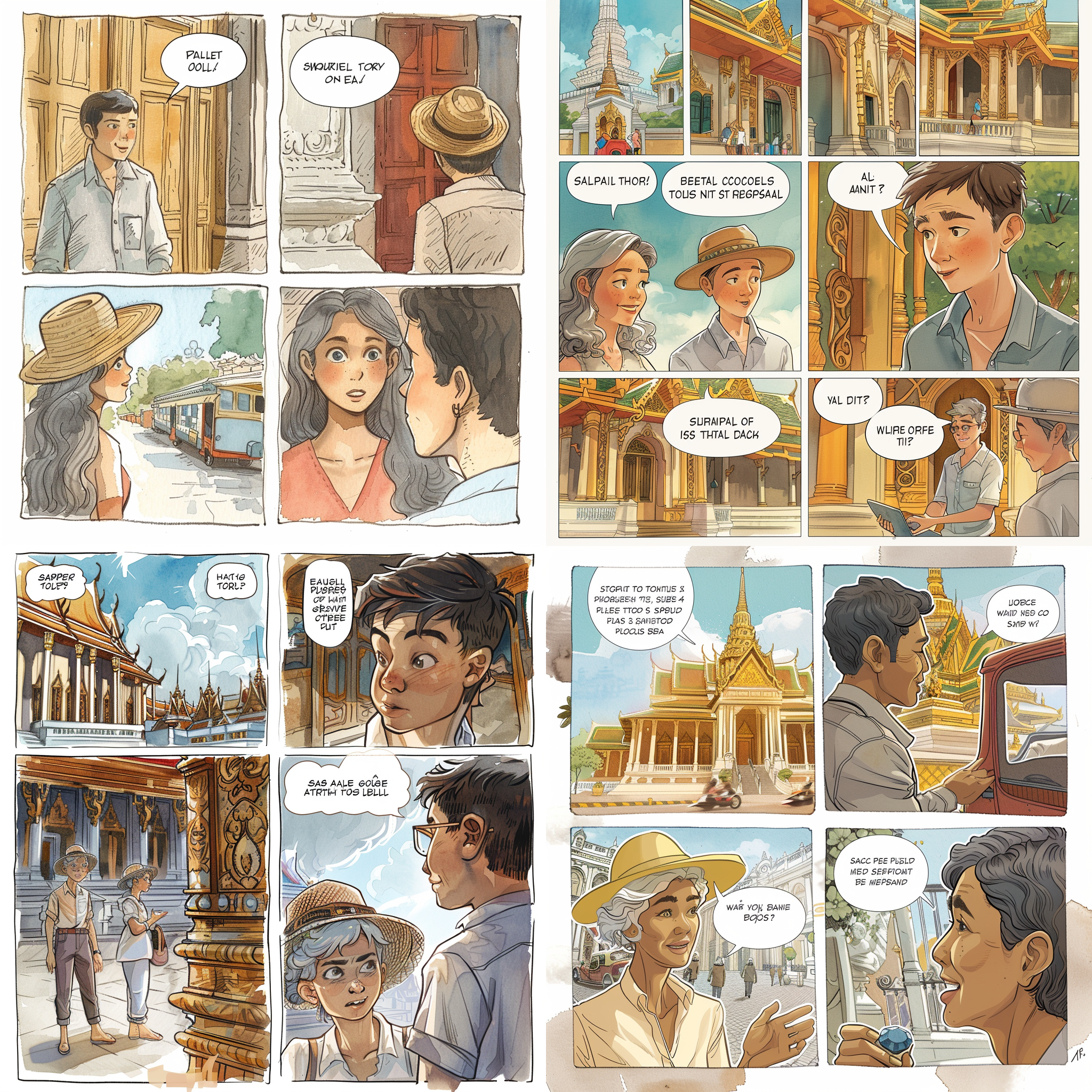

The thing Midjourney cannot do

We rewrote the prompt with explicit speech bubble content: "Speech bubble reads: PALACE CLOSED TODAY FOR ROYAL CEREMONY!" and so on for each panel. This is what Midjourney returned:

This is the fundamental Midjourney limitation for comics. It is excellent at the look of text — weight, kerning, bubble shape, speech tails. It just can't reliably spell what you asked for. Post-v6 models have improved on short logos and signs, but custom dialogue longer than a few words is still a coin flip. For our fifty-comic project, that was fatal. We could have generated the art in Midjourney and composited bubbles in Photoshop, but that's ~200 manual touch-ups, and fifty comics across a cast of four characters also demands the next thing Midjourney is bad at: character consistency at scale.

So we moved the entire pipeline to Wavespeed, which gives us unified API access to a bunch of current image models under one billing account. Time to shop.

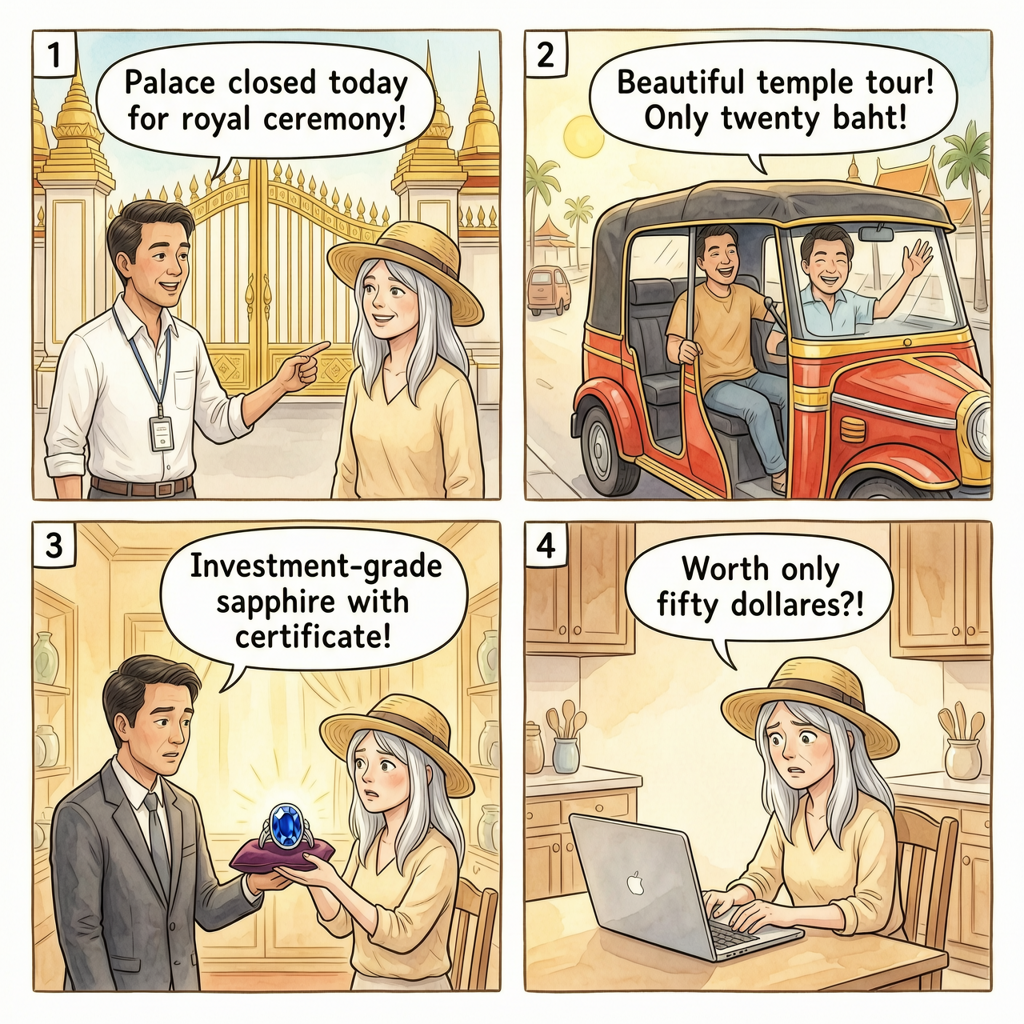

Round 2: Wavespeed four-model showdown

We fed the same watercolor-storybook comic prompt — complete with explicit speech bubbles — into four different current models, all via Wavespeed's HTTP API:

- Seedream v5 Lite (ByteDance) —

bytedance/seedream-v5.0-lite - Wan 2.7 Pro (Alibaba) —

alibaba/wan-2.7/text-to-image-pro - Qwen Image 2.0 (Alibaba) —

wavespeed-ai/qwen-image-2.0/text-to-image - Nano Banana Pro (Google Gemini 2.5 Flash Image) —

google/nano-banana-pro/text-to-image

Each one got a POST /api/v3/{model_id} with {"prompt": ..., "aspect_ratio": "1:1"}. Results polled via GET /api/v3/predictions/{id}/result. All four submitted in parallel. All four came back in under 90 seconds. Here's what they produced:

Seedream v5 Lite ($0.035/image)

Wan 2.7 Pro ($0.075/image)

Qwen Image 2.0 ($0.03/image)

Nano Banana Pro ($0.14/image)

The Verdict

Nano Banana Pro wins decisively for any comic project that needs English text in speech bubbles. It's 4× the price of Seedream and 4.7× Qwen, but the English fidelity and character consistency mean zero manual touch-ups — which at fifty-comic scale is a much bigger cost than the ten-cent-per-image delta.

Google's Gemini 3 Pro Image (what Wavespeed exposes as Nano Banana Pro) is doing something structurally different than the other three. It's a native multimodal transformer, not a diffusion model with a text-encoder stapled on. That architectural difference is why it can generate legible, correctly-spelled text inside images — it's treating the text regions as text, not as "text-shaped pixels." Every other model in this test is a diffusion model guessing at letterforms. That's also why Nano Banana Pro is the clear leader for logos, signage, and any prompt where you specified the exact string.

The three consistency locks

Picking the right model solves text rendering. It does not solve character consistency. That's the harder problem, and it's where most AI comic projects fall apart — your protagonist morphs slightly every generation, and over fifty panels the series feels like fifty strangers wearing similar costumes.

Here's the three-layer lock we settled on, in order of impact:

1. A locked style block

One reusable paragraph describing the visual style, pasted verbatim at the top of every generation. Zero variation between scams. The Thailand block we used:

A single illustrated comic book page in warm soft watercolor

storybook style, showing four sequential panels arranged in a 2x2

grid with small numbers 1, 2, 3, 4 in the upper-left corner of

each panel, separated by thin clean white gutters. Hand-painted

watercolor textures with visible paper grain, muted pastel palette

warmed by golden Thai sunlight, gentle expressive faces with soft

pencil linework, delicate shadows, unhurried storybook pacing.

Each panel contains one clean white rounded speech bubble with a

small pointer tail, holding short printed English dialogue in

simple black lettering — text must be legible and correctly

spelled. Square 1:1 composition, 2K resolution.This is ~100 words that never change. Every word does work: "warm soft watercolor storybook" (not just "watercolor"), "visible paper grain" (prevents the model from going glossy), "muted pastel palette warmed by golden Thai sunlight" (nudges the palette, locks regional feel), "text must be legible and correctly spelled" (this is a real-enough prompt hint — Gemini responds to it).

2. Canonical character sheets

Four protagonists, one reusable paragraph each, pasted verbatim as the CHARACTER: block of every prompt. They rotate across scams by scam type: trust-based scams go to the trusting older woman; transit scams go to the savvy 30-something who pushes back; charm/map scams go to the affable older man; nightlife scams go to the young curious traveler.

Here's one of them — Margie, the headline protagonist:

A 62-year-old Western woman with shoulder-length silver-gray hair

worn under a woven straw sun hat with a cream ribbon, warm blue

eyes behind tortoiseshell reading glasses often perched on her

head, light olive complexion with gentle laugh lines and a

friendly curious expression. She wears a cream linen blouse, tan

wide-leg travel pants, and white canvas sneakers, with a small

tan leather crossbody bag and a coral scarf. Gracious, cheerful,

a little too trusting.A few rules that matter:

- Signature visual anchors. Straw hat with cream ribbon, coral scarf, tan crossbody bag. These are what the model latches onto. "Silver hair" alone isn't enough — the hat is the lock.

- Skin tone and features should be concrete. "Light olive complexion with gentle laugh lines" renders more reliably than "friendly older woman."

- A personality clause. "Gracious, cheerful, a little too trusting" — this influences expressions across panels. You'll get more consistent warm smiles in panel 1 and a believable worried look in panel 4.

- Never paraphrase. Paste the paragraph exactly. Changing one adjective between generations creates detectable drift.

The cast — Margie 62F, Priya 34F, Harry 64M, Marcus 34M — is deliberately balanced 2×2 on age and gender, with mixed ethnicity for representation and audience identification. Margie gets the most scams because she matches the demo anchor, but every character shows up enough that the series has variety.

3. Reference images (the big lock)

This is the biggest consistency lever, and the one most people miss. Once you have one or two generations you're happy with, feed them back in as reference images on every subsequent generation. Nano Banana Pro on Wavespeed exposes this through the edit endpoint:

POST https://api.wavespeed.ai/api/v3/google/nano-banana-pro/edit

{

"prompt": "<style block>\n\nCHARACTER: <Priya paragraph>\n\nSCENE:\nPanel 1: ...\nPanel 2: ...",

"images": [

"https://img.tabiji.ai/scams/bangkok/scam-1.jpg",

"https://img.tabiji.ai/scams/bangkok/scam-2.jpg",

"https://img.tabiji.ai/scams/bangkok/scam-4.jpg"

],

"aspect_ratio": "1:1",

"output_format": "jpeg"

}The images array is the lock. Pass in 2–3 prior comics and Gemini uses them as style anchors — it matches the palette, the linework, the paper grain, and (in our case) even the way speech bubbles are rendered. It does not reuse the characters from the references — because the CHARACTER: block in your prompt explicitly describes a different protagonist.

You need to be explicit about this separation in your prompt, though. We ended the style block with:

Match the watercolor palette, linework, paper texture, and

lettering style of the reference images exactly; the protagonist

must be the NEW character described in CHARACTER below — do not

reuse characters from the reference images.Without that clause, Gemini will sometimes put one of the reference-image characters into the new scene. With it, the style carries over and the character stays new. This one paragraph is what made our fifty-comic scale run work.

The edit-multi gotcha

Wavespeed exposes two reference-image endpoints for Nano Banana Pro: edit and edit-multi. We burned a test generation figuring this out so you don't have to: only edit supports aspect_ratio: "1:1". If your comic is square, edit-multi will reject the request with "value is not one of the allowed values [3:2, 2:3, 3:4, 4:3]". Use edit. Its images array accepts multiple references just fine.

Scaling to 50 comics

Once the recipe was locked, Thailand's scaling was mechanical — and hand-authored. We extracted every scam title + location + first-paragraph summary from the existing HTML of nine Thai city pages (Bangkok, Chiang Mai, Chiang Rai, Koh Phangan, Koh Samui, Krabi, Pai, Pattaya, Phuket), then wrote a Python dict mapping each (city, scam number) to (character, four-panel script):

SCENES = {

("chiang-mai", 5, "margie"): panels(

("Margie looks at a colorful Chiang Mai flyer advertising "

"'Ethical Elephant Sanctuary — Bathing & Riding' with happy "

"elephant photos.",

"Ethical elephant sanctuary — sounds lovely!"),

("Margie arrives at a dusty roadside camp with chained elephants "

"and tourists taking rides, shocked expression.",

"These elephants look unhappy!"),

("A staff member at the camp shrugs dismissively; another tourist "

"climbs on for a ride.",

"All elephants like rides here!"),

("Margie at a real ethical sanctuary later, watching elephants "

"bathing freely in a river, smiling.",

"Real sanctuaries don't chain elephants!"),

),

# ... 41 more

}For each scam, the builder pastes the locked style block + the correct character paragraph + the four-panel scene into one prompt. All 42 bodies get written to /tmp/th_*.json, each referencing the same three Bangkok comics as style anchors.

Then it's a single parallel burst:

for f in /tmp/th_*.json; do

(curl -s -X POST \

-H "Authorization: Bearer $WS_KEY" \

-H "Content-Type: application/json" \

-d @"$f" \

"https://api.wavespeed.ai/api/v3/google/nano-banana-pro/edit" \

| jq -r '.data.id' > "$(dirname "$f")/$(basename "$f" .json).id"

) &

done

waitAll 42 submitted in under three seconds. The polling loop ran in parallel too (6-second poll interval per job), so wall time was capped by the slowest single generation — which turned out to be ~90 seconds. Forty-two production-quality comics produced in about a minute and a half.

This worked cleanly for one country. Fifty comics, hand-authored scripts, watercolor style locked, shipped in an afternoon. It did not survive scaling to eight countries — which turned out to be the most important lesson of the whole project.

The negative-cache gotcha

One last production detail that ate me for twenty minutes: if you HEAD-check an image URL before it exists (we were verifying the R2 path structure before uploading), Cloudflare will cache the 404 for a minute or two. Later requests — even after you've successfully uploaded the file — will keep getting 404s until the negative cache expires.

Workarounds, in order of preference:

- Just wait 60–120 seconds. The negative cache is short.

- Append

?v=1to the URL in your HTML — instant cache bust. - Use Cloudflare's zone-scoped API token to call

purge_cache. Our R2-scoped token couldn't do this, which is exactly the limitation you want in production, but annoying during development.

Also: Cloudflare's WAF returns 403 to requests with the default Python-urllib/3.x User-Agent. Verify your URLs with a browser UA in curl or requests, or you'll chase a ghost.

Cost, time, and the final recipe

Full project cost, generator-side only (we're not counting our time):

| Stage | Model | Calls | Unit | Total |

|---|---|---|---|---|

| Style exploration | Midjourney (Apify) | 7 | ~$0.25 | ~$1.75 |

| Model showdown | Seedream v5 Lite | 1 | $0.035 | $0.04 |

| Model showdown | Wan 2.7 Pro | 1 | $0.075 | $0.08 |

| Model showdown | Qwen Image 2.0 | 1 | $0.03 | $0.03 |

| Production | Nano Banana Pro (text-to-image) | 2 | $0.14 | $0.28 |

| Production | Nano Banana Pro (edit with refs) |

48 | $0.14 | $6.72 |

| Total | 60 | ~$8.90 |

Under nine dollars for fifty production comics across nine city pages. That's seventeen cents per comic, all-in, including every exploration generation and every false start. A freelance illustrator delivering the same in watercolor would be $200–400 per comic, easily six weeks of turnaround on a fifty-comic series. This isn't a subtle productivity difference.

At multi-country scale the math shifts slightly. Each comic gets a Gemini 2.5 Pro synthesis call (~$0.01–0.05) on top of the Nano Banana Pro render — more on why in a minute. That nudges per-country cost from ~$10 to ~$15. Across eight countries and 733 comics, the total has been about $110. Still multiple orders of magnitude below the freelance baseline.

The final recipe, one screen

If you want to reproduce this for your own content project, here's the distilled version:

- Pick the style on a cheap model first. Generate 3–5 aesthetic variants at low cost before committing. Midjourney is great for style exploration because it takes creative risks; we just didn't ship it.

- Use Nano Banana Pro for production whenever you need English text rendered inside the image. Via Wavespeed, endpoint

google/nano-banana-pro/edit, 1:1 aspect ratio. - Write one locked style block. ~100 words, specific and non-negotiable. Paste it verbatim at the top of every prompt.

- Write canonical character sheets. One per recurring protagonist. Include visual anchors (hat, bag, clothing color). Paste verbatim.

- Anchor with reference images. Your first generation or two (from the same style + same character recipe) becomes the style lock for everything that follows. Pass them via the

imagesarray. - Be explicit about character substitution. Tell the model to use the reference images for style only and the NEW character described in your prompt.

- Batch and parallelize. The Wavespeed API handles 40+ concurrent requests without rate-limit issues. Wall time is capped by the slowest single generation.

- Synthesize each script with an LLM, not a keyword classifier. Past a single batch, ask Gemini 2.5 Pro (or Claude, or GPT-4) with a strict

responseSchemato write each four-panel prompt from the source content. Routing to pre-written templates by keyword-match will silently mis-categorize ambiguous inputs — the specifics of why are below. - Fail loud, not soft. If a generation fails twice, flag for manual review. Never silently substitute a template, never reuse an old version. A missing comic beats a wrong comic every time.

Scaling past Thailand: what silently broke

The Thailand pipeline worked because I hand-wrote all 42 four-panel scripts in Python. At 50 comics, that's an afternoon of work. At 733 comics across 130+ cities, it isn't.

So the first scale-up swap was to route each scam to a pre-written template based on keywords in the title. Extract scam titles from HTML, classify into a template (pickpocket, petition, fake-police, bracelet, etc.), fill in {city} and {landmark} placeholders, generate. It was fast — Germany's 88 comics shipped in about an hour.

It was also quietly, catastrophically wrong.

The cascade looked like this:

PATTERNS = [

(r"shell game|three.?card", t_shell_game),

(r"pickpocket|u-bahn|s-bahn|metro", t_pickpocket), # <-- landmine

(r"fake police|polizei|document", t_fake_police),

(r"petition|deaf|clipboard", t_petition),

# ...30 more patterns...

(r".*", t_pickpocket), # fallback

]

def classify(title):

for pattern, template in PATTERNS:

if re.search(pattern, title.lower()):

return templateBerlin scam #3 was titled "Brandenburger Tor Petition & Bracelet Pickpocket Distraction" — a charity-worker at the Brandenburg Gate hands you a clipboard while accomplices slip a woven bracelet onto your wrist and lift your wallet. The classifier matched "pickpocket" first, routed to the generic-transit-pickpocket template, and generated a comic of Priya on a U-Bahn.

Beautiful comic. Beautiful watercolor. Completely wrong scam.

Eight of 88 Germany comics were mis-routed this way — a 9% error rate on a single country. I didn't notice because three quiet failures stacked:

- The API returned

status: completedon every generation. - The programmatic quality gate — valid JPEG header, file size ≥ 120 KB — passed every one.

- Nothing in the image itself says "this is wrong" unless you read it alongside the scam title.

I spot-checked six comics at deploy. All six happened to be correctly routed. Confidence high, shipping green. A human reviewer found the bug by scrolling through the live Berlin page and going "why is there a subway in the Brandenburger Tor scam?"

Why first-match cascades are structurally unsafe

Reordering the patterns doesn't fix this. Any title with overlapping mechanics lands badly somewhere in a first-match cascade, no matter how you order it:

- "Lucky Face" Indian Hair Supplement & Fortune Teller — fortune teller, hair supplement, or lucky face?

- Patpong Ping Pong Show Drink Bill — nightlife, bill shock, or ping-pong?

- Fake Elephant Sanctuary & Tiger Petting Trap — elephant ethics or animal welfare?

Every new template you add introduces new keyword collisions with the existing set. It's an additive-complexity trap.

And the deeper issue: a pre-indexed template library can't encode this specific scam. Even if "bracelet" had matched first, the bracelet template has a hardcoded script — "lucky bracelet tied on wrist, twenty euros demanded, cut off with pocketknife." It doesn't know this is the Brandenburger Tor variant with "deaf children" as the distraction layer. Right category, wrong details. The comic is in the neighborhood, and in this business "in the neighborhood" is worse than silence.

V2: Gemini writes the script

The fix was to stop routing scams to pre-written templates and start writing a bespoke four-panel script for every single scam.

For each scam, the v2 pipeline:

- Extracts title, location, and the first one or two story paragraphs from the HTML.

- Sends it to Gemini 2.5 Pro with a system prompt describing the four-member cast and their scam-fit pairings, the specific scam's content, and a strict JSON response schema — enforced server-side via the

responseSchemaparameter, not a "please return JSON" prayer:

RESPONSE_SCHEMA = {

"type": "object",

"properties": {

"character": {"type": "string", "enum": ["margie", "priya", "harry", "marcus"]},

"character_reason": {"type": "string"},

"panels": {

"type": "array", "minItems": 4, "maxItems": 4,

"items": {

"type": "object",

"properties": {

"scene": {"type": "string"},

"dialogue": {"type": "string"},

},

"required": ["scene", "dialogue"],

},

},

},

"required": ["character", "character_reason", "panels"],

}Gemini returns a four-panel script tailored to that exact scam. The pipeline then assembles STYLE + CHARACTER + SCENE as before and fires Nano Banana Pro.

Here's what Gemini writes for the Brandenburger Tor Petition scam:

{

"character": "margie",

"character_reason": "This scam preys on politeness and a desire

to help a fake charity, which is Margie's key vulnerability.",

"panels": [

{"scene": "Margie stands smiling before Berlin's Brandenburg

Gate. A young woman approaches, holding a clipboard with a

sign that reads 'HELP FOR DEAF CHILDREN'.",

"dialogue": "A signature for the children?"},

{"scene": "Margie focuses on signing. An accomplice's hand

slips a woven bracelet onto her wrist while a third hand

lifts the wallet from her purse.",

"dialogue": "Of course, I'm happy to help."},

{"scene": "The petition-holder is gone. The accomplice

aggressively points to the bracelet on Margie's arm.

Margie looks at her empty, open purse in shock.",

"dialogue": "The clipboard is just a distraction."},

{"scene": "Margie speaks to a German Polizei officer. In

the background, other tourists are being approached by a

similar clipboard group.",

"dialogue": "Lesson learned."}

]

}The resulting comic shows the clipboard, the bracelet, the pickpocket, and the Polizei report. Brandenburg Gate as backdrop. Panel 3's bubble literally names the scam mechanic. It's a comic that actually matches this specific scam.

Fail loud, not soft

V1 had a silent fallback: if a generation failed, substitute a generic template and keep going. That masked routing errors on top of everything else.

V2's contract: if a comic makes it into the output folder, it was generated specifically for this scam. No silent substitutions, ever.

- First failure → auto-retry once via the

/text-to-imageendpoint (Nano Banana Pro's/editendpoint's content filter is slightly stricter; this recovers most filter trips). - Second failure → flag for manual review. Write the scam to a flagged log. Do not substitute. Do not reuse an old version.

A missing comic is vastly better than a wrong comic. The reviewer sees the flag, regenerates manually, and the output is always known-good.

The cast-distribution tell

V1 and V2 disagree sharply about which character should appear in German scams, and the shape of that disagreement is diagnostic:

| Germany cast distribution (88 comics) | V1 | V2 |

|---|---|---|

| Margie (trusting 62-year-old) | 10 | 42 |

| Priya (savvy 34-year-old) | 68 | 28 |

| Harry (charmed 64-year-old) | 6 | 17 |

| Marcus (curious 34-year-old) | 4 | 1 |

V1's generic-pickpocket fallback swept up every unmatched scam and handed it to Priya — she's the savvy-skeptic-on-transit protagonist, perfect for pickpockets, badly miscast for clipboard petitions. V2 picks character based on actual scam mechanics, and Margie (the politeness-target) correctly dominates because German scams skew heavily toward trust-based mechanics — fake charity, restaurant overcharge, fake officials. The 10 → 42 Margie shift isn't a reshuffle. It's v1's systematic misrouting becoming visible in aggregate.

Seven lessons for AI-illustration pipelines

If you're building something like this at any scale past a single batch, these are the things our 733-comic year cost us to learn:

- Keyword classifiers fail silently on ambiguous input. First-match priority cascades are deceptively simple and dangerous. Every new category introduces collisions with existing ones. You will not notice until a human reviewer spots a mismatch.

- Content specificity beats style quality. A beautifully-rendered comic that's wrong about the subject is worse than a sketchy one that's right. AI image models will keep getting prettier. The specificity problem is upstream of that, in how you route source content to a prompt.

- Per-item bespoke synthesis is worth the ~$0.05. Adding an LLM step to write each prompt is rounding error against the cost of reprinting a book or losing credibility. Speed-vs-quality inverts the moment output is customer-visible.

- Structured JSON output is non-negotiable. Gemini, Claude, GPT-4 all support response schemas. Use them. Don't parse "here is the JSON you requested" — it will break on the one comic where the model decides to add a preamble.

- Silent fallback is a bug, not a feature. If your pipeline can't produce a valid output for an item, it should refuse to produce any output for that item. Flag and surface. "We generated 88 comics" should mean exactly that, not "we generated 80 comics + 8 templates."

- File-size and format checks catch ~90% of API failures and 0% of semantic failures. A comic that rendered a subway instead of a clipboard will pass every programmatic check. You cannot automate your way out of needing a semantic-correctness pass — but you can push correctness upstream by making the LLM explicitly name the mechanic in the script it writes.

- When it breaks, regenerate — don't patch. The temptation to "fix the classifier and re-run only the affected comics" is real but hollow. Rebuilding the whole country's set with v2 also gave us a higher baseline for every comic, not just the broken ones. ~$15 and one hour per country. Cheap.

What's next

Three things still on the list:

- A semantic-correctness pass. File-size and JPEG-header checks pass wrong comics. An LLM-as-judge — hand the generated image and the scam text to Gemini and ask "does this comic show X?" — would catch semantic drift that v2's upstream scripting can't fully prevent.

- Richer pose cues in character sheets. Characters occasionally drift when they have to do something unusual — lie on a beach, climb stairs, ride a tuk-tuk. A "typical poses" subsection per character would tighten this.

- Per-scam dialogue length discipline. Anything over ~8 words per bubble still occasionally mis-spells, even on Nano Banana Pro. Keep bubbles short. It's better comic-writing anyway.

Tools referenced in this post

- Wavespeed — unified API access to 1000+ AI models including all four we tested, under one billing account. Pricing at wavespeed.ai/pricing. This is the single highest-leverage tool in this pipeline.

- Gemini 2.5 Pro — the LLM we use for per-scam script synthesis in v2. The key feature is the

responseSchemaparameter, which server-side enforces a JSON shape on every response. Claude's and OpenAI's equivalents work just as well; pick your poison. - Apify — the

imageaibot/midjourney-botactor we used for Midjourney automation via Discord. - Claude Code — the agentic coding environment we wrote and ran this entire pipeline through. Wrote the prompts, fired the Wavespeed calls, uploaded to R2, injected into HTML, ran the git workflow, merged the PR. One conversation, under two hours of wall time, fifty comics shipped. Then, a few weeks later, one more conversation to rebuild v2 after the scale-up failure.

If you want to see all 733 comics in production, the country hubs are at Thailand, France, Germany, Greece, Spain, China, Indonesia, and Canada. Click any city to see the comics in context — and each country has its own locked visual style.

Newsletter

Get the next post by email.

One email when I publish something new. No spam, no fixed schedule, unsubscribe anytime.

Recommended Reading

- AI Image Generation: 5 Models, 26 Real Results

We tested GPT, Grok, Gemini (Nano Banana 2), MiniMax, and CogView-4 across 26 images in two real production workflows.

- Nano Banana 2 vs Grok for Concept Art

We tested Nano Banana 2 (Gemini 3.1 Flash) against Grok (xAI) on 8 identical lofi anime art prompts.

- Wavespeed Review: The Best AI Video API

Hands-on review of Wavespeed — the AI inference platform with 1,000+ models, pay-per-use pricing, and a unified API.