OpenClaw vs Claude Code: I Choose Freedom

The Claude Max-plan token buffet is subsidized. The signs say it’s closing.

- 15-routine daily cap — hit at 2:36 AM

- A $137 API run on five tabiji cities extrapolates to a ~9× subsidy vs the Max-plan price

- Hidden peak-hour token burn + chunky 90-day uptime bars on Anthropic’s own status page

So my recurring crons run on OpenClaw with Z.AI/GLM, MiniMax, and 19 other models in rotation. Claude Code stays for one-offs.

The 7 AM routine pause

I checked my phone over coffee and found an email from Claude, sent at 2:36 AM, telling me my routines were paused.

I run three Claude Max plans, one Codex Pro, plus subscriptions to MiniMax and Z.AI/GLM. I am, by any reasonable definition, the kind of customer Anthropic should want to keep happy. And the routine that had stopped, scam-narrative-rewrite-batch, was doing exactly the kind of overnight, scheduled, non-interactive work that a Max plan is supposed to enable.

It got capped at 15. The cap was the soft signal I’d been waiting for.

The math the buffet hides

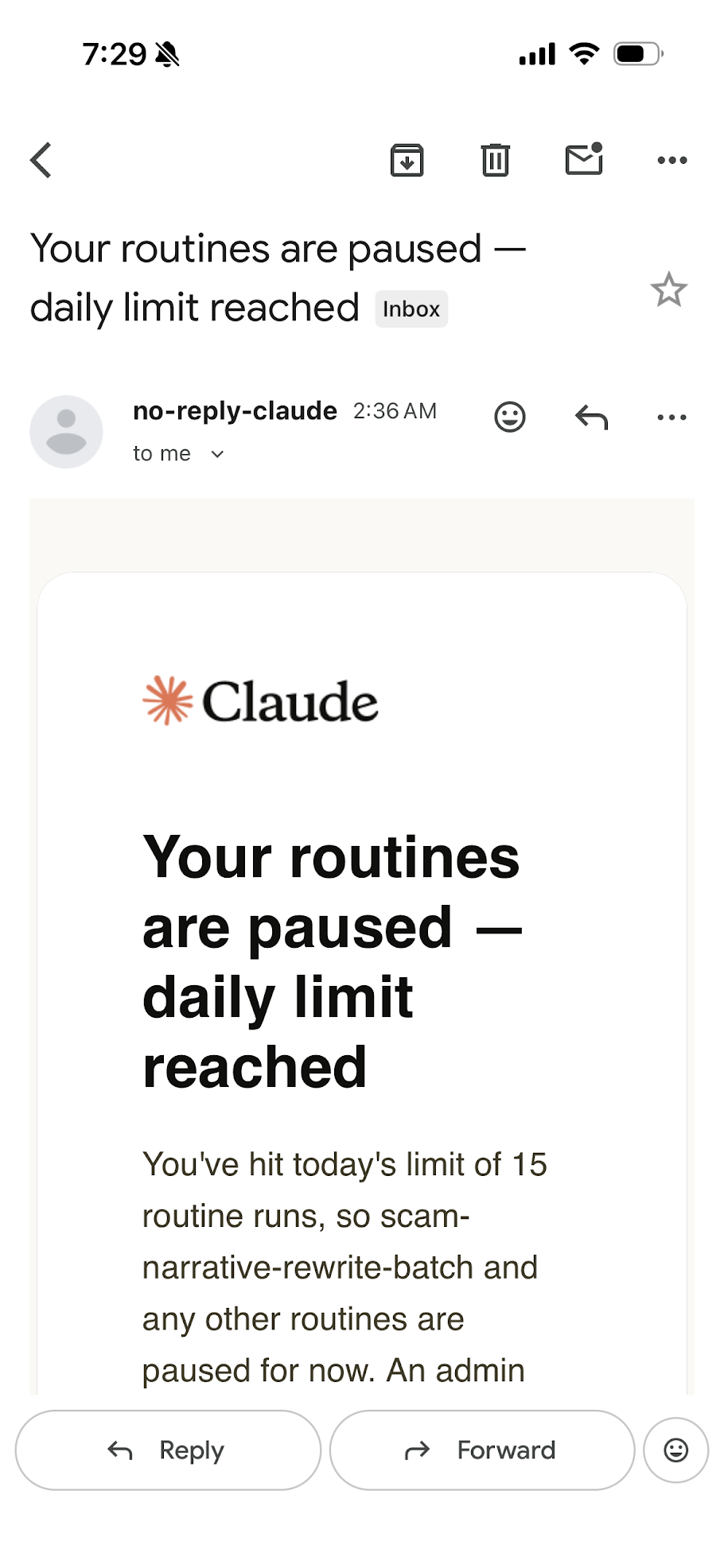

Last week I ran a five-city PR through the tabiji.ai content pipeline against the Anthropic API directly. The cost summary at the end:

$137.39 for five cities, or about $25 per city at steady state. The full 224-page run comes in around $5,500.

Now compare that to what I actually pay: roughly $600/month for three Max plans plus another ~$200 for Codex Pro, so ~$800/month. Most months I generate more token-equivalent usage than that 224-page run. Against the ~$600 that actually goes to Anthropic, a $5,500 month implies a subsidy to my account on the order of 9×. They’re losing money on me. They’re losing money on every heavy user. The only way the math closes is if the average user is ~10× lighter than I am, OR they reprice, OR they cap.
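The 9× figure is simple division. A minimal sketch using the post's own numbers, assuming each Max plan costs $200/month and that only the Max-plan spend counts as Anthropic's revenue from this account (the Codex Pro money goes to OpenAI):

```python
# Figures quoted in this post; the $200/month per-plan price is an assumption.
FULL_RUN_API_COST = 5_500.0  # estimated API cost of the full 224-page run (USD)
MAX_PLANS = 3
MAX_PLAN_PRICE = 200.0       # USD per Max plan per month (assumed)
CODEX_PRO_PRICE = 200.0      # USD per month, paid to OpenAI rather than Anthropic


def subsidy_ratio(api_equivalent: float, monthly_spend: float) -> float:
    """Dollars of API-priced usage bought per dollar of subscription spend."""
    return api_equivalent / monthly_spend


anthropic_spend = MAX_PLANS * MAX_PLAN_PRICE      # 600.0
total_spend = anthropic_spend + CODEX_PRO_PRICE   # 800.0

print(f"vs. Anthropic spend: {subsidy_ratio(FULL_RUN_API_COST, anthropic_spend):.1f}x")
# prints "vs. Anthropic spend: 9.2x"
print(f"vs. all subscriptions: {subsidy_ratio(FULL_RUN_API_COST, total_spend):.1f}x")
# prints "vs. all subscriptions: 6.9x"
```

Even counting the Codex Pro subscription against the same usage, the ratio stays near 7×, still far from break-even.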

OpenAI is in the same situation with Codex Pro. The economics of AI inference are not subtle: it costs real GPU-hours to generate tokens, and a $200 monthly fee buys you a fixed dollar amount of GPU-hours regardless of how the marketing copy reads. The buffet is real. It’s just temporary.

And it’s already shrinking

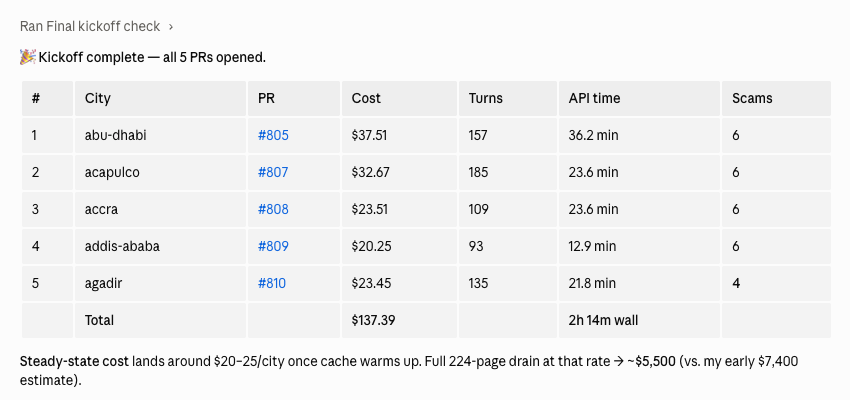

If you think the subsidy ending is years away, look at the dashboard:

The headline reads “All Systems Operational.” The bars tell a different story. Claude Code at 99.22% uptime over 90 days is roughly 17 hours of degraded or down service per quarter. Claude.ai at 98.75% is 27 hours. Read each yellow or red bar as a day where my recurring crons either failed silently, retried into rate-limit walls, or hit timeouts and burned my routine quota anyway.
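Converting an uptime percentage into hours is just the complement times the window. A small sketch, assuming the status page's percentage covers a flat 90-day (2,160-hour) window and counts every non-operational minute equally (real status pages may weight partial outages differently):

```python
def downtime_hours(uptime_pct: float, window_days: int = 90) -> float:
    """Hours of non-operational time implied by an uptime percentage over a window."""
    window_hours = window_days * 24  # 2,160 hours for 90 days
    return window_hours * (1 - uptime_pct / 100)


for name, pct in [("Claude Code", 99.22), ("Claude.ai", 98.75)]:
    print(f"{name}: {downtime_hours(pct):.1f} h over 90 days")
# prints "Claude Code: 16.8 h over 90 days"
# prints "Claude.ai: 27.0 h over 90 days"
```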

That’s the tail end of the same supply story as the cost math: too much demand and finite GPU capacity, where the only knobs are price (subsidies tightening), volume (the 15-run cap I just hit), or quality (degradations). All three are in motion right now.

And there’s the signal nobody documents: peak-hour token burn. The same scheduled routine that runs cleanly at 2 AM costs noticeably more in tokens during US morning business hours, when the platform is most loaded. There’s no banner, no dashboard line item, no warning email — but if you watch per-task usage on heavy crons, you can see it. The buffet runs implicit surge pricing at lunch, and you only notice when your daily quota burns 30% faster than it did yesterday.
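One way to watch for this yourself is to compare the same routine's per-run token usage inside and outside a peak window. A minimal sketch, assuming you already log one record per run; the `RunRecord` shape, the routine names, and the 8:00 to 13:59 local peak window are all hypothetical choices, not anything a provider exposes:

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class RunRecord:
    routine: str          # hypothetical routine name, e.g. "nightly-audit"
    started_at: datetime  # local start time of the run
    tokens: int           # total tokens counted for that run


def peak_vs_offpeak(records: list[RunRecord], routine: str,
                    peak_hours: range = range(8, 14)) -> float:
    """Median tokens per run inside the peak window divided by the median outside it."""
    mine = [r for r in records if r.routine == routine]
    peak = [r.tokens for r in mine if r.started_at.hour in peak_hours]
    off = [r.tokens for r in mine if r.started_at.hour not in peak_hours]
    if not peak or not off:
        raise ValueError("need runs both inside and outside the peak window")
    return median(peak) / median(off)
```

Comparing one routine against itself controls for workload, but inputs still vary run to run, so a ratio well above 1 is a hint worth investigating rather than proof of surge pricing.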

And separate from any of that, there’s the model-deprecation risk: Anthropic can sunset a model I’ve been depending on with 60 days of notice. If my pipeline is glued to a specific Claude version, that’s a forced migration on someone else’s timeline.

21 models, 5 providers, one Mac mini

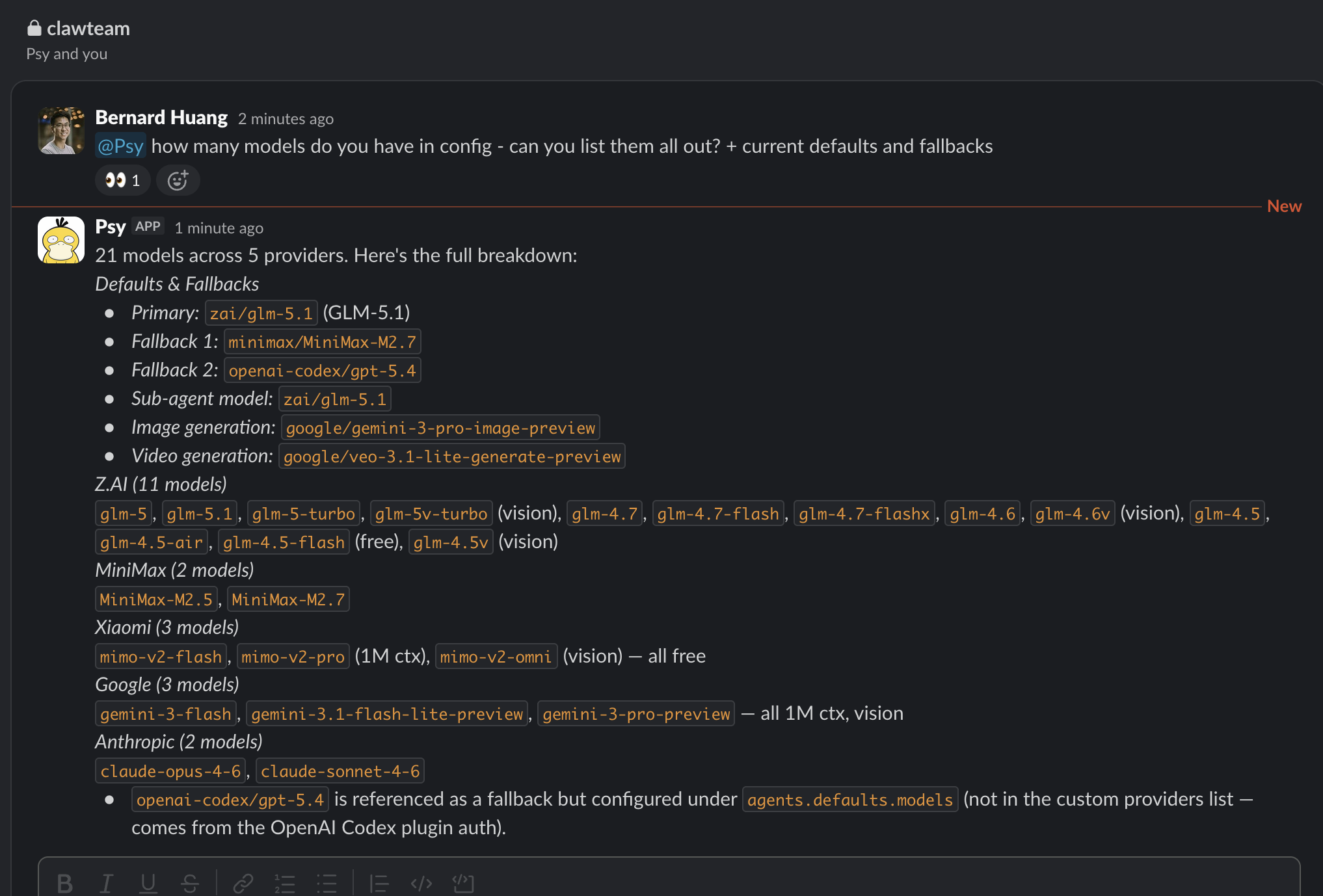

This is what the alternative looks like. OpenClaw is the open-source agentic harness we run for tabiji’s recurring infrastructure — the bit you’d call Claude Code if you were locked into Anthropic. Psy — our OpenClaw agent — posts its current model config into our team Slack:

The defaults: Z.AI’s GLM-5.1 as primary. MiniMax-M2.7 as first fallback. GPT-5.4 via the Codex plugin as second fallback. Xiaomi’s mimo-v2-flash and v2-pro available with 1M-token context, free. Google Gemini 3 Pro for image and video generation. Anthropic still in the rotation as claude-opus-4-6 and claude-sonnet-4-6, called when they’re the right tool, not because they’re the only tool.

When GLM rate-limits, OpenClaw fails over to MiniMax. When MiniMax has a degradation window, it falls through to GPT-5.4. The recurring crons keep running. No 2:36 AM email.
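OpenClaw's actual router and config schema aren't shown here, so this is only a sketch of the failover behavior described above. It assumes each provider is wrapped in a function that raises a hypothetical `ProviderUnavailable` exception on a rate limit or degradation; the model names mirror the post's defaults, and the stub providers are illustrative:

```python
from typing import Callable, Sequence


class ProviderUnavailable(Exception):
    """Hypothetical error a provider wrapper raises on a rate limit or degradation."""


Provider = tuple[str, Callable[[str], str]]  # (model name, call function)


def complete_with_fallback(prompt: str, chain: Sequence[Provider]) -> tuple[str, str]:
    """Try each provider in order; return (model used, response) from the first that works."""
    errors = []
    for model_name, call in chain:
        try:
            return model_name, call(prompt)
        except ProviderUnavailable as exc:
            errors.append(f"{model_name}: {exc}")  # remember why, then fall through
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Illustrative stubs standing in for real provider clients.
def rate_limited(prompt: str) -> str:
    raise ProviderUnavailable("rate limited")


def healthy(prompt: str) -> str:
    return f"ok: {prompt}"


chain = [("glm-5.1", rate_limited), ("minimax-m2.7", healthy), ("gpt-5.4", healthy)]
print(complete_with_fallback("summarize", chain))
# prints ('minimax-m2.7', 'ok: summarize')
```

Because the chain is an ordered list, promoting a different model to primary is a one-line change, which is what keeps the recurring work portable.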

And it all runs on a $600 Mac mini in a room. Not a hyperscaler in Virginia, not a multi-region replicated cloud setup, just a small silver box on a desk routing requests to whichever provider has capacity. The whole architecture is a few pages of YAML and a router script.

My dual-stack reality

I want to be honest about what I actually do, because the headline is a thesis but the day-to-day is a compromise.

I still pay for three Claude Code Max plans. I use Claude Code every single day. For one-off tasks — interactive coding, exploration, audits, drafting (this very post), debugging weird production issues at 11 PM — nothing else feels as good. The model quality is excellent, the harness is mature, the tool calls are fast. I wrote about where the model takes shortcuts at scale precisely because I push it hard enough to find the edges.

What lives on OpenClaw is anything recurring. Scheduled content runs. Nightly audits. Multi-page sweeps. The kind of work that I want to fire-and-forget on a cron, where a 2:36 AM “your routines are paused” email is unacceptable. That work cannot live on a single vendor with a daily cap, because it doesn’t care about my timezone.

That’s the dual stack: Claude Code for the 9 AM “help me figure this out” loop, OpenClaw for the cron that runs at 3 AM whether anyone’s watching or not.

The hedge thesis

Choosing OpenClaw isn’t about Anthropic being bad. They’re not; the product is the best in its class. It’s about not betting your business on someone else’s pricing model holding.

Freedom in this context = optionality. The Max plan gives you one model and a daily limit. OpenClaw gives you 21 options and a circuit breaker. When Anthropic tightens — whether by pricing, capping, or deprecating — the cost to me is reading a config file and changing one default. The cost to a single-vendor shop is rebuilding their pipeline on a forced timeline.

The product is excellent. The price terms are subsidized. The two are different bets, and only one of them is yours to control. Keep the recurring work portable, use Claude Code where it sings, and when the buffet closes, walk out with a plate.
